2000+ successful projects with 1000+ satisfied clients


Your winning idea is super secure with our NDA

Artificial Intelligence has made the most amazing changes in the way human beings function. AI is at work behind almost every social and non-social service, and it is responsible for removing many of the monotonous tasks out there. Look at Google: its filters make sure spam emails end up in the spam folder, and when you search for something on Google, it gives you the most relevant results. As for the AI behind social media platforms like Facebook or Twitter, we can’t help but admit it is doing a good job.

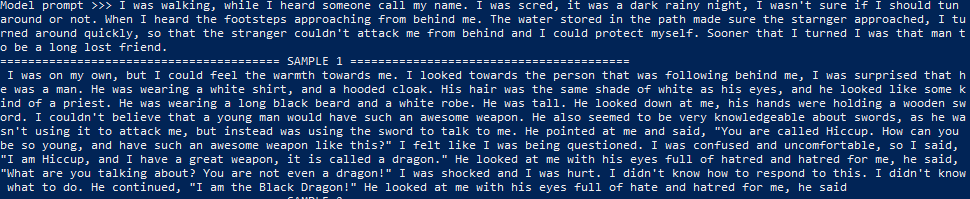

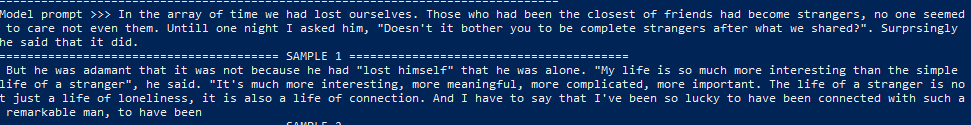

On Facebook, face recognition suggests our friends’ profiles when we upload a photo. Even in this age of Artificial Narrow Intelligence, the outcomes of AI have already left us awestruck, and with the release of OpenAI GPT-2 you can be certain to be amazed by what it can do. We at Vyrazu Labs have used OpenAI GPT-2 to understand how it works and how to use it for custom needs. While doing this experiment, we learned a lot about the OpenAI GPT-2 AI text generator, which helped us generate the texts most relevant to a given command.

As much as OpenAI GPT-2 amazes us, it also tickles our curious minds. When we started studying how to make it as powerful a tool as possible, we found the problems as well as the benefits of using it. Come, let us look into the research we did, which will help you make good use of it for tasks like generating text from a single command. I can speak about all this with such enthusiasm because I have personally used it. However, we are still unable to use it in our blogs, as that requires fine-tuning and digging deep into the system’s logic before we can actually use it the way we all want to.

Many regard this as the first time a machine has appeared to understand a sentence written, in whole or in part, by humans, and the implications for the future are truly staggering. This is all thanks to Natural Language Processing (NLP), which has made remarkable progress in the past few years.

The GPT-2 AI text generator builds on this technology in several ways, starting with language modeling.

Language modeling (LM) is one of the most important elements of modern NLP. A language model is a probabilistic model that takes a sequence of words or characters and predicts the next word or character. The LM can also be used to improve the accuracy of the L1 and L2 models discussed below.
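As a minimal sketch of that definition, the code below builds the simplest possible word-level language model, a bigram model, from a made-up sentence: it counts which word follows which and turns the counts into next-word probabilities. GPT-2 does the same job with a Transformer over byte pair tokens and a far larger body of text; the corpus and function names here are hypothetical.

```python
from collections import Counter, defaultdict


def train_bigram_model(text):
    """Count adjacent word pairs and convert the counts to next-word probabilities."""
    words = text.lower().split()
    counts = defaultdict(Counter)
    # Every adjacent pair (previous word, next word) is one observation.
    for prev, nxt in zip(words, words[1:]):
        counts[prev][nxt] += 1
    model = {}
    for prev, followers in counts.items():
        total = sum(followers.values())
        model[prev] = {word: n / total for word, n in followers.items()}
    return model


def predict_next(model, word):
    """Return the most probable next word, or None for a word never seen."""
    followers = model.get(word.lower())
    if not followers:
        return None
    return max(followers, key=followers.get)


corpus = "the cat sat on the mat and the cat slept on the sofa"
model = train_bigram_model(corpus)
print(model["the"])               # {'cat': 0.5, 'mat': 0.25, 'sofa': 0.25}
print(predict_next(model, "on"))  # the
```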

Language models have long been used to improve the accuracy of machine translation. Here, L1 is the model that turns a command into text and L2 is the output of L1, and there is no guarantee that the results will be the same from one run to the next: the LM can improve the reply and generate a text completely different from the previous one.
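One reason two runs can differ is that the next word is usually sampled from the model’s probability distribution instead of always taking the single most likely word. This sketch, using a made-up distribution and hypothetical function names, contrasts greedy decoding, which always gives the same answer, with weighted random sampling, where different random seeds can produce different replies.

```python
import random


def greedy_next(probs):
    """Always pick the single most probable word."""
    return max(probs, key=probs.get)


def sample_next(probs, rng):
    """Draw one word at random, weighted by its probability."""
    words = list(probs)
    return rng.choices(words, weights=[probs[w] for w in words], k=1)[0]


# Hypothetical next-word distribution after the prompt "The weather is".
next_word_probs = {"sunny": 0.5, "cold": 0.3, "terrible": 0.2}

# Greedy decoding repeats itself every time.
print([greedy_next(next_word_probs) for _ in range(3)])

# Sampling with different seeds can produce different replies.
for seed in (1, 2):
    rng = random.Random(seed)
    print(seed, [sample_next(next_word_probs, rng) for _ in range(5)])
```

Fixing the seed makes sampled output reproducible, which is handy when comparing prompts.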

GPT-2 is the successor to the Generative Pre-trained Transformer (GPT), the original NLP framework developed by OpenAI. The complete GPT-2 AI text generator comprises 1.5 billion parameters, more than ten times as many as the original GPT. It was trained on text from about 8 million web pages, selected from outbound links on Reddit.

Git clone the repository and cd into its directory for the remaining commands.

Then, follow the instructions for either native or Docker installation.

All steps can optionally be done in a virtual environment using tools such as virtualenv or conda.

Install TensorFlow 1.12 (with GPU support, if you have a GPU and want everything to run faster)

or

Install other python packages:

Download the model data

Build the Dockerfile and tag the created image as gpt-2:

Start an interactive bash session from the gpt-2 docker image.

You can opt to use the flag if you have access to an NVIDIA GPU and a valid install of nvidia-docker 2.0.

WARNING: Samples are unfiltered and may contain offensive content.

Some of the examples below may include Unicode text characters. Set the environment variable:

to override the standard stream settings in UTF-8 mode.

To generate unconditional samples from the small model:

There are various flags for controlling the samples:
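Two common sample controls are a temperature and a top-k cutoff (the gpt-2 sampling scripts expose parameters by those names). The plain-Python sketch below, with hypothetical function names and toy scores, shows what the two settings do to the model’s raw next-token scores (logits): temperature rescales them before the softmax, and top-k keeps only the k highest-scoring tokens. It illustrates the technique; it is not the repository’s code.

```python
import math
import random


def softmax(logits, temperature=1.0):
    """Turn raw scores into probabilities; a lower temperature sharpens them."""
    scaled = [x / temperature for x in logits]
    top = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - top) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def top_k_filter(logits, k):
    """Keep the k highest scores (ties at the cutoff are kept); k=0 keeps all."""
    if k <= 0 or k >= len(logits):
        return list(logits)
    cutoff = sorted(logits, reverse=True)[k - 1]
    return [x if x >= cutoff else -math.inf for x in logits]


def sample(logits, temperature=1.0, top_k=0, rng=random):
    """Sample a token index after top-k filtering and temperature scaling."""
    probs = softmax(top_k_filter(logits, top_k), temperature)
    return rng.choices(range(len(logits)), weights=probs, k=1)[0]


logits = [2.0, 1.0, 0.5, -1.0]  # toy scores for four candidate tokens
print(top_k_filter(logits, 2))  # [2.0, 1.0, -inf, -inf]
print(sample(logits, temperature=0.7, top_k=2, rng=random.Random(0)))
```

A small top-k with a temperature below 1 keeps the text focused; raising either makes it more varied and more likely to wander.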

To check flag descriptions, use:

To give the model custom prompts, you can use:

To check flag descriptions, use:

These setup instructions were taken from OpenAI’s gpt-2 GitHub repository.

OpenAI GPT-2 research was supported by the National Science Foundation, the U.S. Air Force Research Laboratory, the National Institute of Standards and Technology, and the MIT AI Lab. The MIT AI Lab, at the Massachusetts Institute of Technology in Cambridge, aimed to create powerful, open-source tools for scientists, engineers, and educators to use in their research.

The first word of a new text is a random token sampled by OpenAI GPT-2. From there, the model predicts and samples the next token again and again, each time conditioned on the tokens generated so far, until the number of tokens set in the code is reached. The GPT-2 AI text generator does this for us, which is the most complex part. We only have to tell it our goal, which here is to generate text of the length provided in the code.
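The token-by-token loop described above can be sketched as follows. A hypothetical lookup table stands in for GPT-2: it only looks at the last token, whereas GPT-2 conditions on the whole history, but the loop has the same shape: sample a token, append it, and repeat until the requested length is reached. The table, the `<start>` marker, and the function names are made up for illustration.

```python
import random

# Hypothetical stand-in for GPT-2: maps the last token to the probabilities
# of the next one. A real model computes these from the full history.
TOY_TABLE = {
    "<start>": {"The": 0.6, "A": 0.4},
    "The": {" model": 0.7, " text": 0.3},
    " The": {" model": 0.7, " text": 0.3},
    "A": {" model": 1.0},
    " A": {" model": 1.0},
    " model": {" writes": 0.8, " reads": 0.2},
    " writes": {" text": 1.0},
    " reads": {" text": 1.0},
    " text": {".": 1.0},
    ".": {" The": 0.5, " A": 0.5},
}


def next_token_probs(history):
    """Return next-token probabilities given everything generated so far."""
    return TOY_TABLE[history[-1]]


def generate(length, seed=None):
    """Sample exactly `length` tokens, one at a time."""
    rng = random.Random(seed)
    history = ["<start>"]
    for _ in range(length):
        probs = next_token_probs(history)
        tokens = list(probs)
        token = rng.choices(tokens, weights=[probs[t] for t in tokens], k=1)[0]
        history.append(token)
    return history[1:]  # drop the start marker


print("".join(generate(12, seed=3)))
```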

OpenAI GPT-2 works with units of text called tokens. The tokens of the user’s prompt carry information about the declared topic and steer the generated text towards it. So the next step is to choose the prompt; then we tell the GPT-2 AI text generator what the goal is, and the algorithm does the rest. Many steps are involved in OpenAI GPT-2, and the program needs to be tested on hundreds of thousands of sentences to understand how GPT-2 generates replies depending on the sentences users feed it.
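To see how a prompt steers the topic, here is a toy sketch: a word-pair (bigram) model built from a made-up two-sentence corpus and extended greedily from two different prompts. It only looks at the prompt’s last word, while GPT-2 attends to every token of the prompt; the corpus and function names are hypothetical.

```python
from collections import Counter, defaultdict


def build_bigrams(corpus):
    """Map each word to a Counter of the words that follow it."""
    follows = defaultdict(Counter)
    words = corpus.split()
    for prev, nxt in zip(words, words[1:]):
        follows[prev][nxt] += 1
    return follows


def continue_prompt(follows, prompt, length):
    """Greedily extend the prompt by up to `length` words."""
    words = prompt.split()
    for _ in range(length):
        options = follows.get(words[-1])
        if not options:
            break  # nothing ever followed this word in the corpus
        words.append(options.most_common(1)[0][0])
    return " ".join(words)


corpus = (
    "fans cheer when the striker scores his goal . "
    "fans cheer when the chef plates her dessert ."
)
follows = build_bigrams(corpus)
print(continue_prompt(follows, "the striker", 4))  # the striker scores his goal .
print(continue_prompt(follows, "the chef", 4))     # the chef plates her dessert .
```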

OpenAI has released four versions of the GPT-2 AI text generator model: the small 124M-parameter model, about 500MB on disk; the medium 355M model, about 1.5GB; the large 774M model, about 3GB; and the most recent, the XL 1558M (1.5-billion-parameter) model, around 6GB. There are many AI tutorials on the internet, but these models are much larger than the ones most of them cover, which also means they cannot be employed easily: even the 124M model takes up much of the GPU’s memory when the user fine-tunes it.
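The model sizes above follow from each model’s hyperparameters. As a worked check, the sketch below counts the weights of a GPT-2-style Transformer from the published layer counts and embedding widths (12/768, 24/1024, 36/1280 and 48/1600, with a 50,257-token vocabulary and a 1,024-token context), assuming, as in GPT-2, that the output layer reuses the token embedding matrix. Multiplying by 4 bytes per 32-bit float gives on-disk sizes close to the figures quoted. The function name is hypothetical.

```python
# Published GPT-2 hyperparameters: (number of layers, embedding width).
GPT2_CONFIGS = {
    "124M": (12, 768),
    "355M": (24, 1024),
    "774M": (36, 1280),
    "1558M": (48, 1600),
}
N_VOCAB = 50257  # shared byte pair vocabulary size
N_CTX = 1024     # maximum context length in tokens


def gpt2_param_count(n_layer, n_embd, n_vocab=N_VOCAB, n_ctx=N_CTX):
    """Count weights and biases; the output layer reuses the token embedding."""
    d = n_embd
    embeddings = n_vocab * d + n_ctx * d  # token + position embeddings
    per_block = (
        (d * 3 * d + 3 * d)    # attention: query/key/value projection
        + (d * d + d)          # attention: output projection
        + (d * 4 * d + 4 * d)  # MLP: expand to 4*d
        + (4 * d * d + d)      # MLP: project back to d
        + 2 * (2 * d)          # two layer norms, each with scale and shift
    )
    final_norm = 2 * d
    return embeddings + n_layer * per_block + final_norm


for name, (n_layer, n_embd) in GPT2_CONFIGS.items():
    params = gpt2_param_count(n_layer, n_embd)
    size_gb = params * 4 / 1e9  # 32-bit floats take 4 bytes each
    print(f"{name}: {params:,} parameters, about {size_gb:.1f} GB as float32")
```

For the 124M configuration this gives 124,439,808 parameters (about 0.5 GB), and for the XL configuration 1,557,611,200 (about 6.2 GB). Most of the growth comes from the 12·d² term per layer, which is why width and depth together drive memory use during fine-tuning.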

The 355M model needs extra training techniques before it can be fine-tuned without running out of GPU memory. The larger models cannot be fine-tuned at all on present server GPUs, which would run out of memory (OOM). The real architecture of OpenAI GPT-2 is not easy to grasp, so the explanation below treats the GPT-2 AI text generator as a black box that takes inputs and gives outputs.

As with earlier text generators, the input is a sequence of tokens and the output is the probability of each token appearing next. These probabilities act as the anchor the AI uses to pick the next token in the sequence. Here, both the input and the output are byte pair encodings instead of character or word tokens. Unlike character-level or word-level Recurrent Neural Network approaches, the input is compressed to the shortest combination of bytes, including formatting and case, which can be treated as a compromise between the two approaches.
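Byte pair encoding builds its vocabulary by repeatedly merging the most frequent adjacent pair of symbols, so common words end up as single tokens while rare words stay split into smaller pieces. The sketch below implements that classic merge loop on the characters of a few made-up words. GPT-2’s real tokenizer is a byte-level variant with a 50,257-token vocabulary and extra pre-processing, so treat this as an illustration of the idea only; the function name and word list are hypothetical.

```python
from collections import Counter


def learn_bpe(words, num_merges):
    """Learn byte pair merges from a word list (toy, character level).

    Each word starts as a sequence of single characters; at every step the
    most frequent adjacent pair of symbols is merged into one new symbol.
    """
    corpus = Counter(tuple(word) for word in words)
    merges = []
    for _ in range(num_merges):
        pairs = Counter()
        for symbols, freq in corpus.items():
            for pair in zip(symbols, symbols[1:]):
                pairs[pair] += freq
        if not pairs:
            break  # every word is already a single symbol
        best = max(pairs, key=pairs.get)
        merges.append(best)
        new_corpus = Counter()
        for symbols, freq in corpus.items():
            merged, i = [], 0
            while i < len(symbols):
                if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == best:
                    merged.append(symbols[i] + symbols[i + 1])
                    i += 2
                else:
                    merged.append(symbols[i])
                    i += 1
            new_corpus[tuple(merged)] += freq
        corpus = new_corpus
    return merges, corpus


merges, vocab = learn_bpe(["low", "low", "lower", "newest", "newest", "widest"], 4)
print(merges)  # [('l', 'o'), ('lo', 'w'), ('e', 's'), ('es', 't')]
print(dict(vocab))
```

After four merges the frequent word "low" is a single token, while "newest" is still spelled out as n, e, w plus the shared suffix "est".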

However, this has a side effect: the length of the final output can be unpredictable, so if you want an output of 100 words, it might give you only 63. The encoded data is later decoded back into text for human beings to read. As it has been trained on a huge number of web pages, its grip on the English language is extraordinary. This allows its internal knowledge to transfer to other datasets and perform exceptionally well with a minimal amount of fine-tuning data.
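One likely reason for the unpredictable word count, assuming the length is set in tokens (as it is in GPT-2’s sampling scripts), is that one word can span several tokens. This small sketch uses a hand-picked, hypothetical token split; GPT-2’s real tokenizer would divide the sentence its own way. As in GPT-2’s byte pair vocabulary, a token can carry its own leading space, so decoding here is plain concatenation.

```python
# Hypothetical token split for illustration only.
tokens = ["Token", "ization", " splits", " rare", " words", " into", " pieces", "."]


def decode(tokens):
    """Join tokens back into text; spaces live inside the tokens themselves."""
    return "".join(tokens)


def count_words(text):
    """Count whitespace-separated words."""
    return len(text.split())


text = decode(tokens)
print(len(tokens), "tokens ->", count_words(text), "words:", text)
# 8 tokens -> 6 words: Tokenization splits rare words into pieces.
```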

Because its internal training data is mostly English, languages that do not use Latin characters, such as Russian and Indian languages, will not fine-tune without errors. If you choose to work with OpenAI GPT-2, the 124M model should give the best outcome, as it offers a balance of speed, creativity, and size. If you have huge amounts of training data, the 355M model may give you better results.

To build apps with the GPT-2 AI text generator, we first have to look at the apps that already exist. Among them is Adam King’s Talk to Transformer, which has used the 1.5B model since its release on 5th November. TabNine uses OpenAI GPT-2 fine-tuned on GitHub code to provide probabilistic code completion. On the PyTorch side, Hugging Face has released a Transformers library (with GPT-2 support) and has also created apps like Write With Transformer, which serves as a text auto-completion tool.

There are also projects showing how to deploy a small model as a web service using the Flask application framework. OpenAI GPT-2 is also being used on the Raspberry Pi 2, but that project is still in its very early stages. The core idea is that the GPT-2 AI text generator can be used to build hardware that doesn’t need any custom components. This is a great way to reduce hardware costs, and it could also be used to build a low-cost, open-source computing platform. There is already an app in the Google Play Store that has been performing like a star.
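Several of these projects wrap a GPT-2 model in a small web service. Flask applications are WSGI applications, so to stay dependency-free this sketch swaps Flask for a plain WSGI callable from Python’s standard library. The `/generate` route, the JSON fields, and `generate_text` are all hypothetical; `generate_text` is a placeholder where a real GPT-2 call would go.

```python
import json


def generate_text(prompt, length):
    """Hypothetical stand-in for a real GPT-2 call; replace with your model."""
    return prompt + " ..." * length  # placeholder continuation


def app(environ, start_response):
    """Minimal WSGI app: POST /generate with JSON {"prompt": ..., "length": ...}."""
    if environ.get("PATH_INFO") != "/generate" or environ.get("REQUEST_METHOD") != "POST":
        start_response("404 Not Found", [("Content-Type", "text/plain")])
        return [b"not found"]
    size = int(environ.get("CONTENT_LENGTH") or 0)
    payload = json.loads(environ["wsgi.input"].read(size) or b"{}")
    text = generate_text(payload.get("prompt", ""), int(payload.get("length", 20)))
    body = json.dumps({"text": text}).encode("utf-8")
    start_response("200 OK", [("Content-Type", "application/json"),
                              ("Content-Length", str(len(body)))])
    return [body]
```

To try it locally, `wsgiref.simple_server.make_server("127.0.0.1", 8000, app).serve_forever()` serves the app on port 8000. Keeping the model call behind one function makes it easy to swap the placeholder for a real model later.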

This star is generating impressive revenue for the further development of the tool. I think it is going to be very hard for existing apps to compete, as future revenue will go to the new apps that are yet to be created. These new apps will use AI and machine learning for advanced performance and functionality. A few other apps on the Play Store will be able to use the GPT-2 AI text generator to create their own custom code. We will look at these apps in a future blog post.

This is a great example of how easy it can be to write an application that is deployed as a web service, and it is also easy to build a code completion bot that does it for us. This brings us back to the question we raised out of curiosity: how do you use GPT-2? First of all, we want a way to run the OpenAI GPT-2 code in our app without using the OpenAI GPT-2 API, which is why a project called OpenAI GPT-2-Code-Runner is ongoing.

This project has been in the works for a long time, and the code-runner has finally been released. It is open source, so you can use it once you install it. The code-runner runs the code on the target machine; it is written in Python and uses the Flask framework to do so, which means it runs on all platforms that Flask supports.

The code-runner was created with the idea of having a web server with a REST API that runs the code on the target machine. It is a Python script, gpt.py, that uses a couple of external libraries to generate the code for us. The OpenAI GPT-2 code it generates is a wrapper on top of the GPT code and implements some of the functionality of the GPT-1 code.

The problems that intelligent AI like OpenAI GPT-2 will bring in the future are not hard to explain. After all, we have already gathered many examples of human and AI bonds, from both fiction and real life. One of the biggest problems humankind is facing right now is people perceiving that they are in love with an AI like OpenAI GPT-2.

Recently, I read a post on Reddit in which u/levonbinsh, writing in r/mediaSynthesis, explained their feelings for OpenAI’s neural network. In the post, the user explains how the auto-generated texts filled the void left by not having a partner, and goes on to describe the replies OpenAI GPT-2 gave to their text prompts.

This might be a misconception or a publicity stunt. Still, it is a concerning matter, because people who are truly lonely and looking for a companion might think they can have a future with an AI. This is just a small example of the problems that are about to arise, and the future will be filled with problems like these.

Of course, one solution to these problems would be to avoid creating AI that is too intelligent. That is not an easy task, because there are so many different kinds of AI. One of the most prominent examples is the AlphaGo program. What is the most significant thing about AlphaGo? It is a program that reached the highest level of play in the world at the game of Go.

The program beat the South Korean Go champion Lee Sedol, one of the best players in the world. That is a really impressive feat for a program whose only input is the position on the board. It was considered close to a miracle, and this Google AI called AlphaGo was enough to silence the crowd.

AlphaGo relies on neural networks. A neural network is a computer program that has been trained on a training set: it learns to recognize patterns in the data and then generate the expected output. The system takes input data, treated as the most important signal, and uses its output as feedback. In other words, it learns from the data it is given and can even generate a new training set based on what it has learned.
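The paragraph above describes a neural network that learns a pattern from a training set, using its output as feedback. As a minimal sketch of that loop, here is the simplest possible neural network, a single perceptron, learning logical OR from its four-row truth table. This is a textbook illustration, not AlphaGo’s method, which trains deep networks at a vastly larger scale; the learning rate, epoch count, and function names are arbitrary choices.

```python
def predict(weights, bias, inputs):
    """Fire (1) if the weighted sum of the inputs is above zero, otherwise 0."""
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if total > 0 else 0


def train_perceptron(samples, epochs=10, lr=0.1):
    """Learn weights for a single artificial neuron from labelled examples."""
    weights = [0.0] * len(samples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for inputs, target in samples:
            output = predict(weights, bias, inputs)
            error = target - output  # feedback: how wrong the output was
            weights = [w + lr * error * x for w, x in zip(weights, inputs)]
            bias += lr * error
    return weights, bias


# Training set for logical OR: the pattern the network should discover.
OR_SAMPLES = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 1)]
weights, bias = train_perceptron(OR_SAMPLES)
print([predict(weights, bias, x) for x, _ in OR_SAMPLES])  # [0, 1, 1, 1]
```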

This era of advancement has shown many colors, and OpenAI GPT-2 has put an exclamation mark on all our blogs, thanks to its outstanding performance. Going from being known as a dangerous tool that couldn’t be released to being the tool that redraws the lines of content generation is no easy journey. But OpenAI GPT-2 made it to the finals and is performing like a pro.

OpenAI GPT-2 can be used in so many different ways, once it is trained, that every field might benefit from this tool. When news of OpenAI GPT-2 first came out, we all held our breath, waiting for its final release. In November 2019, the dream came true: the final model was out, and everyone was excited to see an AI doing wonders. It will help not only the IT industry but all of mankind, once everyone properly recognizes it and it is trained in different ways for different kinds of use.

I hope you enjoyed reading this article and found the information you were looking for. If you think I have missed something, mention it in the comments section or leave feedback. We can have a heated discussion on this topic then.

If you are looking to build an app or web service that features OpenAI GPT-2, contact us. We are one of the leading software companies in India. There is nothing we can’t develop, and we make sure to stay in constant communication with our clients, which helps us avoid mistakes during development. I look forward to meeting you here and talking about AI development.

Vyrazu Labs, a global leader in the area of robust digital product development


For sales queries, call us at:

If you’ve got powerful skills, we’ll pay your bills. Contact our HR at:
